Managing and refining prompts is a crucial part of optimizing Large Language Model (LLM) interactions. As prompts evolve with iterations and improvements, it becomes essential to keep track of changes, test different versions, and revert to previous ones if needed. Prompt versioning in Athina AI allows you to systematically manage your prompt iterations, ensuring consistency, collaboration, and ease of experimentation. This guide will walk you through the importance of prompt versioning, how it works, and its implementation using Athina’s Prompt Playground.

Why Do We Need Prompt Versioning?

Prompt versioning is a system that automatically assigns a version number to each saved change of a prompt template. Managing prompts manually can become cumbersome, especially when multiple iterations are tested. Prompt versioning ensures:

- Traceability: You can see when and how a prompt changes.

- Flexibility: Easily switch between versions to compare effectiveness.

- Collaboration: Teams can work on the same prompt while maintaining version control.

- Reliability: Prevents accidental loss of a well-performing prompt by allowing easy rollbacks.
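To make the four properties above concrete, here is a minimal sketch of the idea in plain Python. It is not Athina's API: the `PromptVersion` and `PromptHistory` names, the `commit`, `get`, and `diff` methods, and the example templates are all hypothetical, chosen only to illustrate automatic version numbering, change tracking, and side-by-side comparison.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
import difflib


@dataclass(frozen=True)
class PromptVersion:
    number: int           # assigned automatically, starting at 1
    template: str         # the prompt text saved in this version
    message: str          # short note describing what changed
    created_at: datetime  # when the version was saved (traceability)


@dataclass
class PromptHistory:
    """Hypothetical in-memory version store; an illustration, not Athina's API."""
    versions: list = field(default_factory=list)

    def commit(self, template: str, message: str = "") -> PromptVersion:
        # Each saved change gets the next version number; nothing is overwritten.
        version = PromptVersion(
            number=len(self.versions) + 1,
            template=template,
            message=message,
            created_at=datetime.now(timezone.utc),
        )
        self.versions.append(version)
        return version

    def get(self, number: int) -> PromptVersion:
        # Any earlier version stays retrievable, which is what makes rollback possible.
        if not 1 <= number <= len(self.versions):
            raise KeyError(f"no version {number}")
        return self.versions[number - 1]

    def diff(self, old: int, new: int) -> list:
        # Line-based unified diff between two versions, for comparison.
        return list(difflib.unified_diff(
            self.get(old).template.splitlines(),
            self.get(new).template.splitlines(),
            fromfile=f"v{old}", tofile=f"v{new}", lineterm="",
        ))


history = PromptHistory()
history.commit("Summarize the text: {text}", "initial prompt")
history.commit("Summarize the text in three bullet points: {text}", "constrain format")

for line in history.diff(1, 2):
    print(line)
```

Because every commit appends a new immutable record, a well-performing earlier prompt can always be fetched again with `history.get(1)`, regardless of how many later versions exist.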

Prompt Versioning in Athina AI

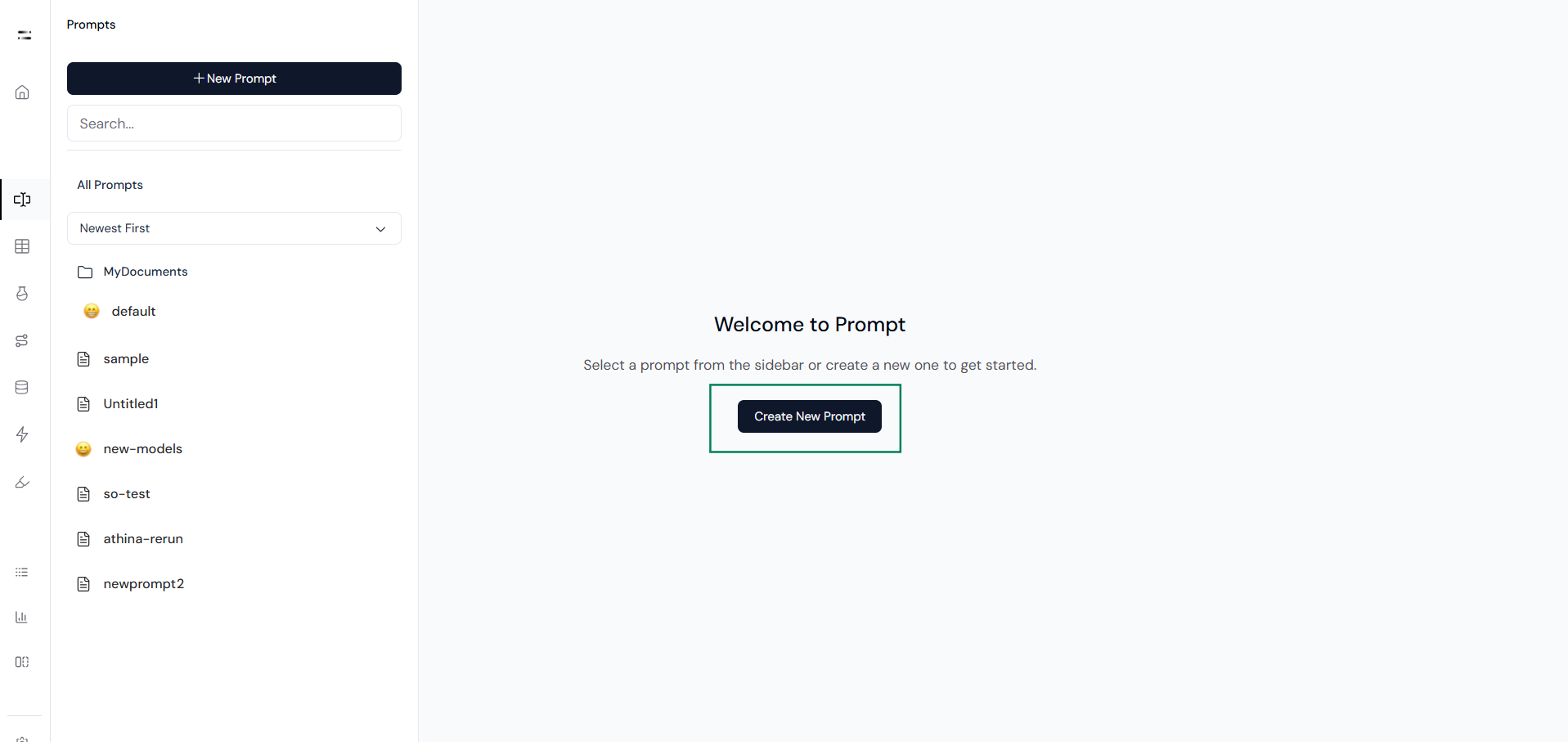

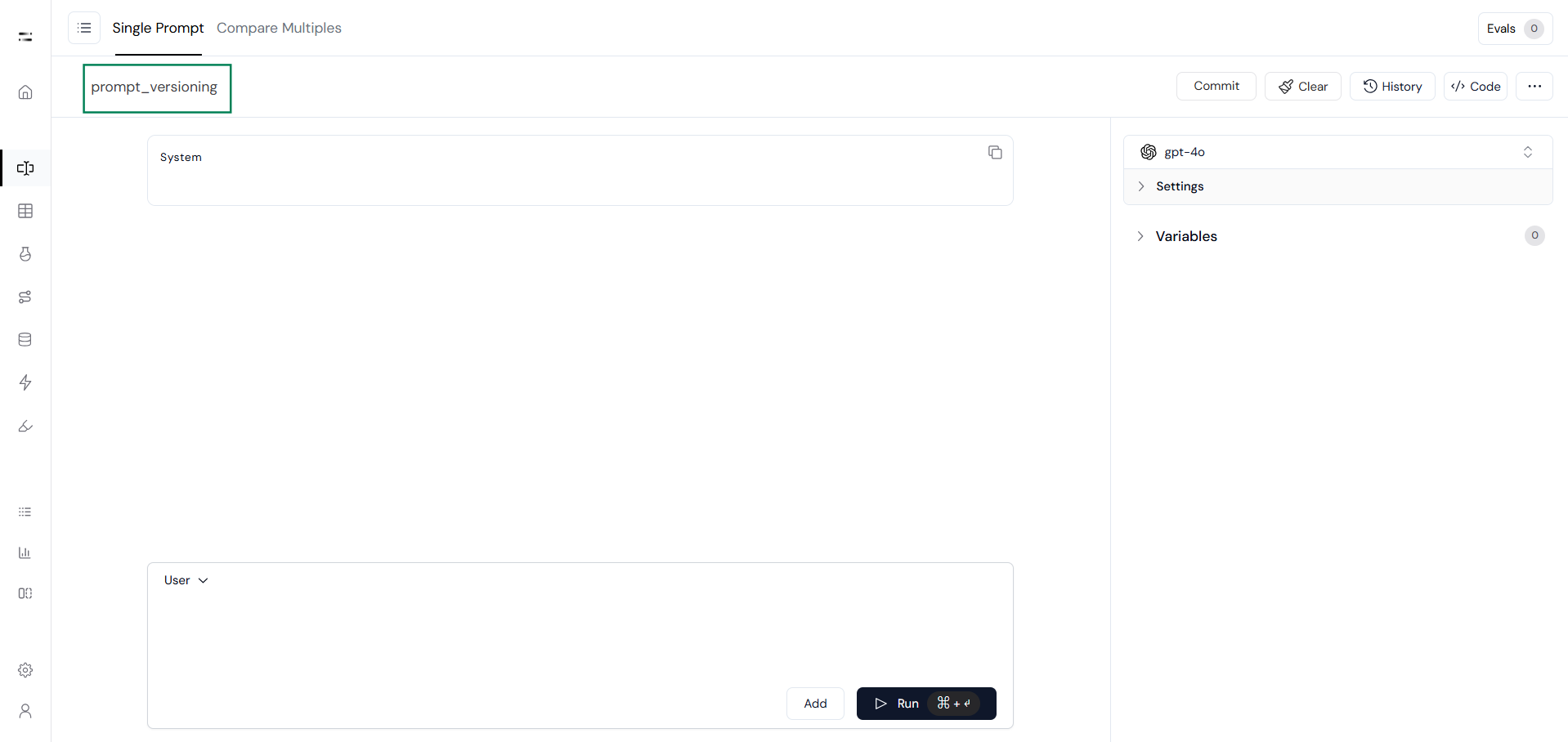

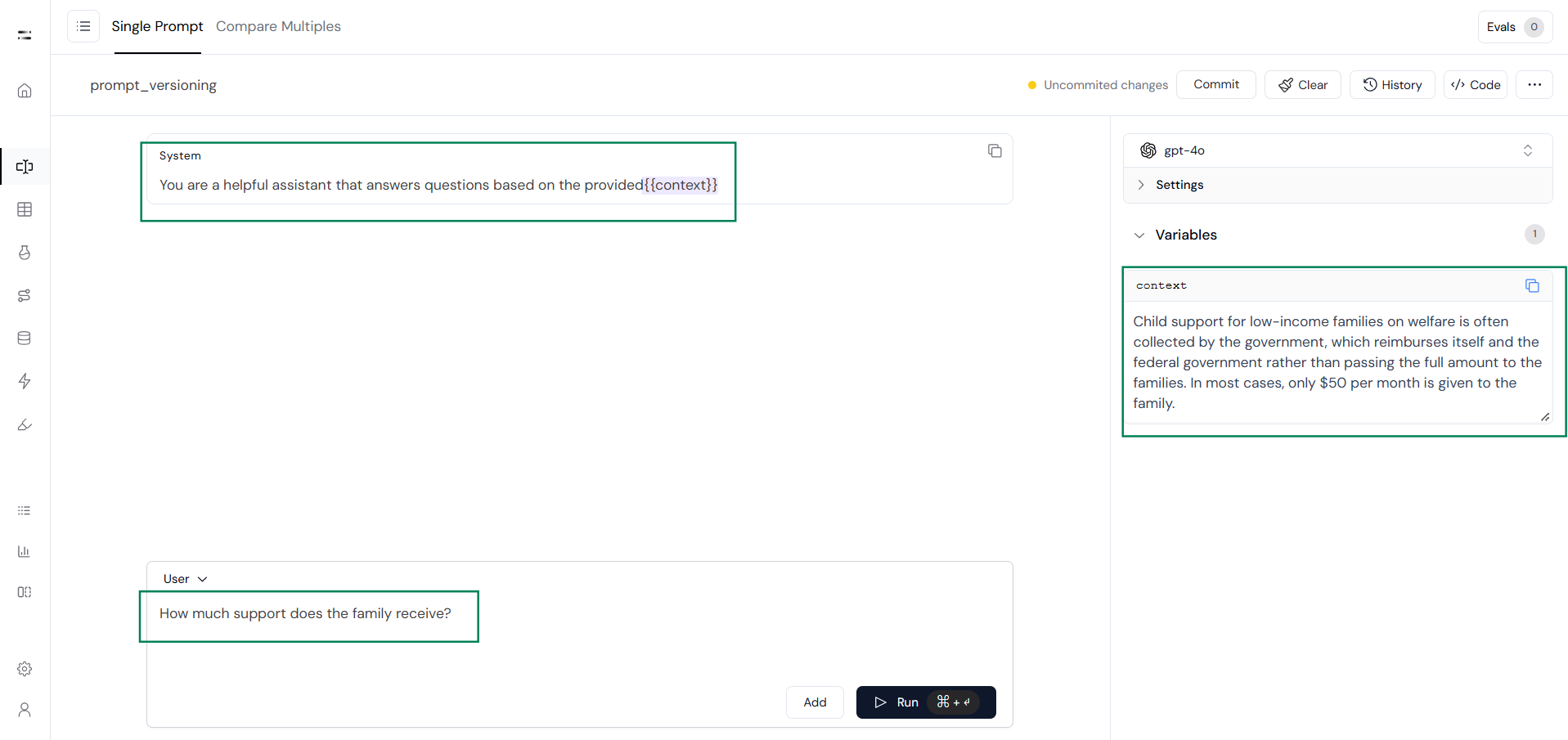

Now let’s see step by step how to version prompts:

Step 1: Create a Prompt

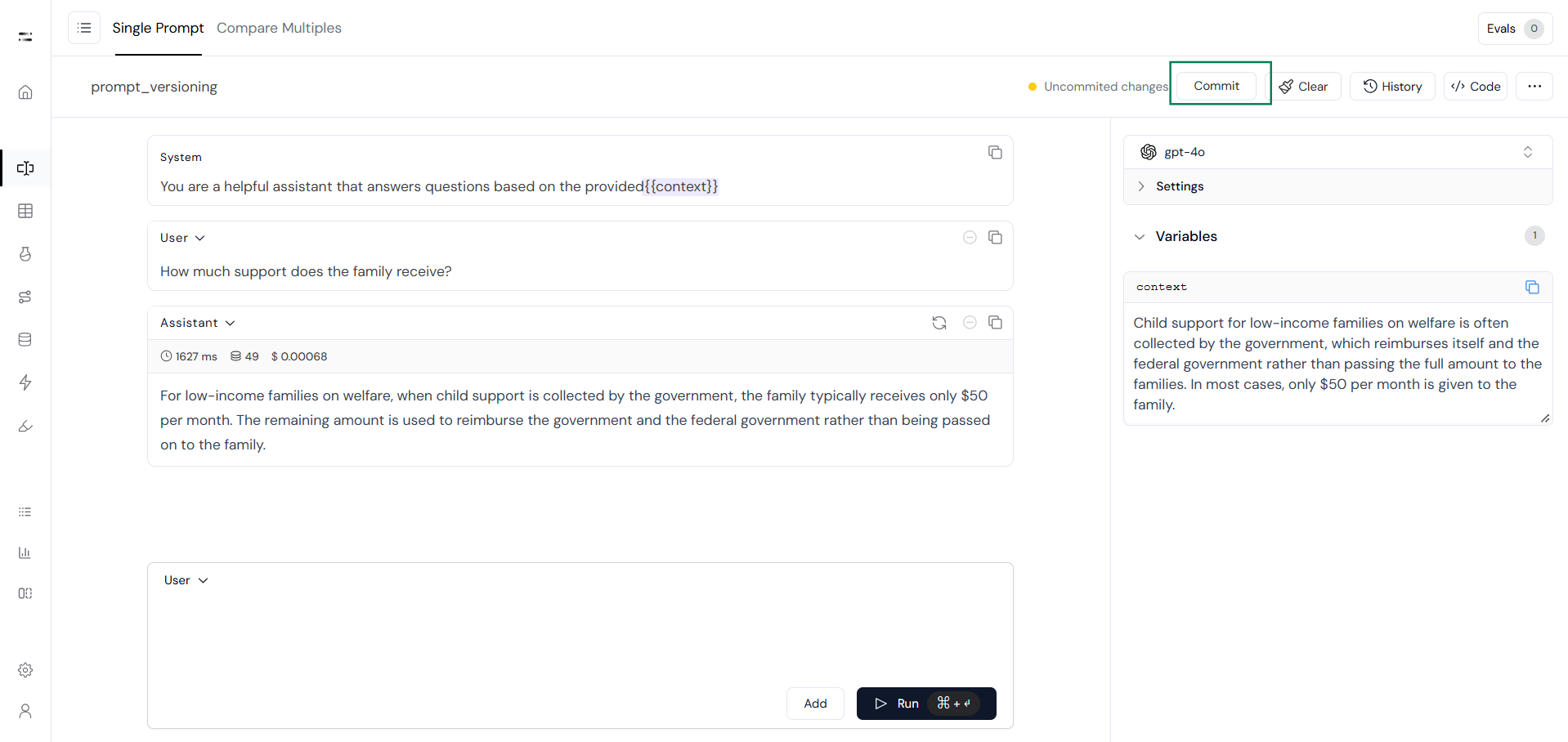

Step 2: Test the Prompt

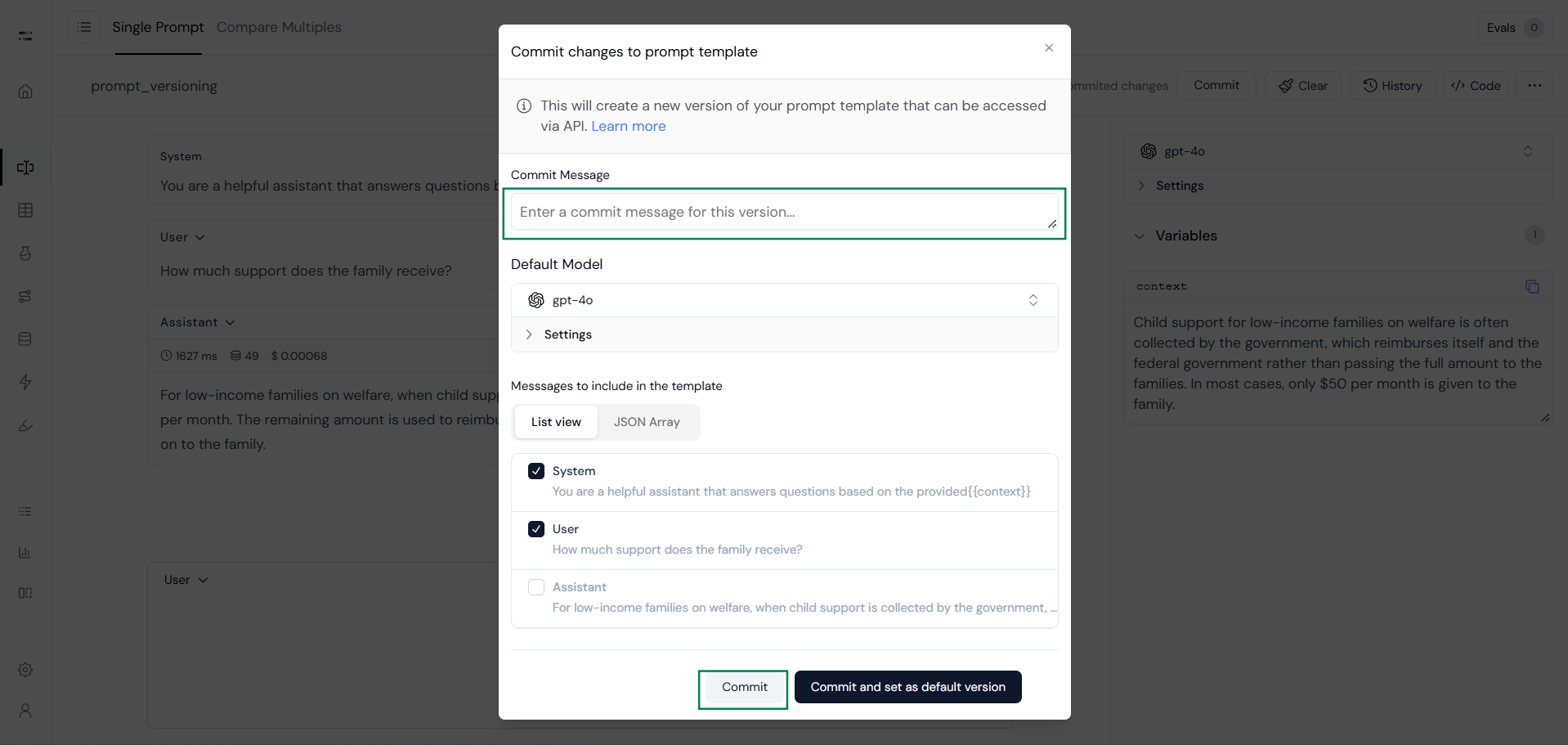

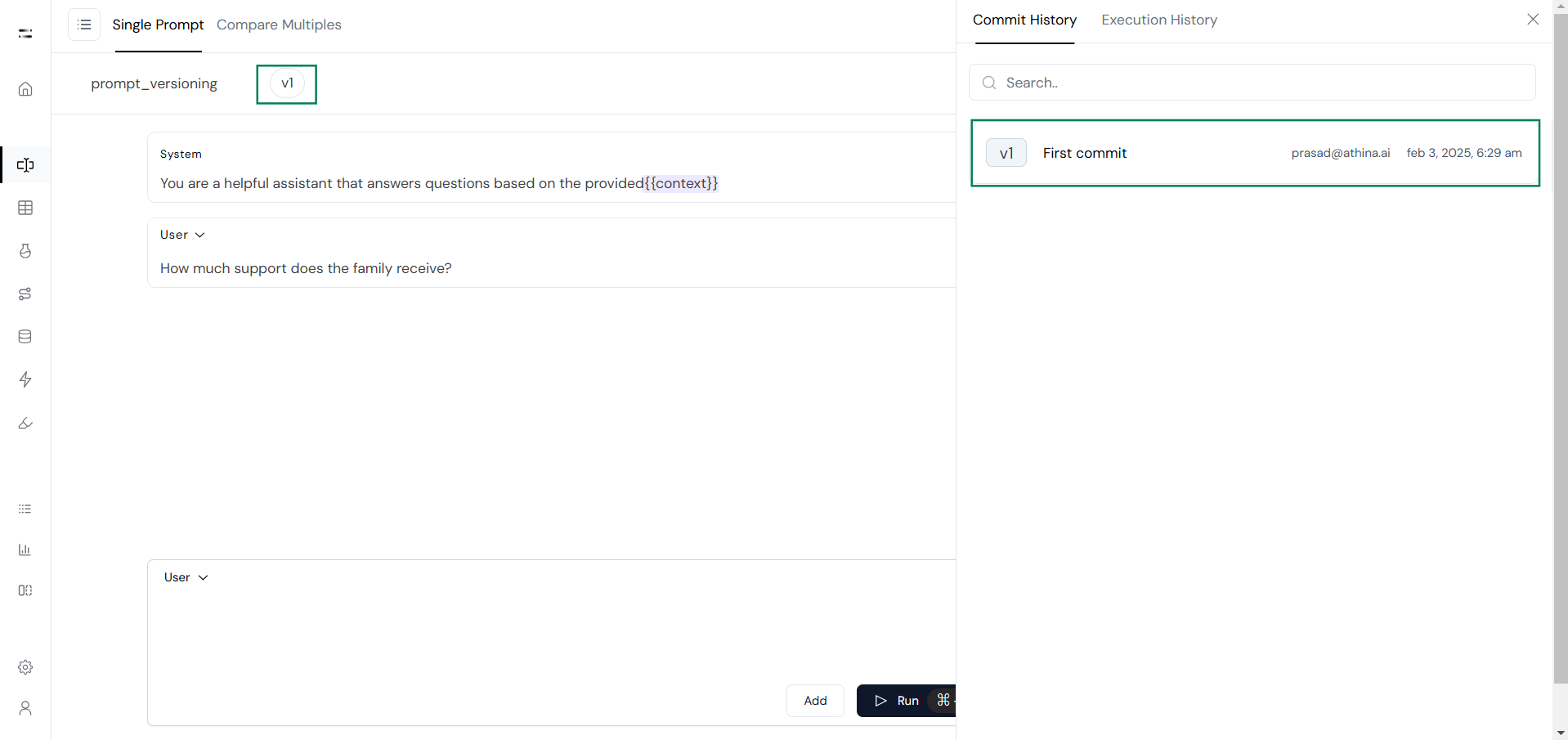

Step 3: Commit a Prompt Version

Prompt versioning in Athina AI provides a structured way to manage, track, and optimize prompts over time. By maintaining version history, users can experiment, revert to previous versions when needed, and collaborate seamlessly with their teams. With version tracking, default version settings, and rollback capabilities, they can confidently iterate on prompts while ensuring that well-performing versions are preserved and easily accessible.
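
One way to picture the default-version and rollback behavior mentioned above is as a pointer that moves across an append-only list of committed versions. The sketch below assumes exactly that model; the `VersionedPrompt` class, its method names, and the sample templates are hypothetical and do not represent how Athina implements these features.

```python
class VersionedPrompt:
    """Hypothetical sketch: committed versions plus a movable default pointer."""

    def __init__(self) -> None:
        self._versions = []    # index 0 holds version 1
        self._default = None   # version number served by default, if any

    def commit(self, template: str, make_default: bool = True) -> int:
        # Save a new version and optionally mark it as the default.
        self._versions.append(template)
        number = len(self._versions)
        if make_default:
            self._default = number
        return number

    def set_default(self, number: int) -> None:
        # Point the default at any existing version; history is left untouched.
        if not 1 <= number <= len(self._versions):
            raise ValueError(f"no version {number}")
        self._default = number

    def default_template(self) -> str:
        if self._default is None:
            raise LookupError("no version has been committed yet")
        return self._versions[self._default - 1]


prompt = VersionedPrompt()
v1 = prompt.commit("Answer concisely: {question}")
v2 = prompt.commit("Answer in detail with citations: {question}")

# Roll back by moving the default pointer; version 2 remains in the history.
prompt.set_default(v1)
print(prompt.default_template())
```

In this model a rollback deletes nothing: it only changes which version is served by default, so the newer version can be restored later with another `set_default` call.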